Real-Time Voice to Sign Language Translation with Amazon Nova 2 Sonic and Pollen Robotics Amazing Hand - Part 1: Frontend and Voice Processing

This is Part 1 of a 3-part series covering a real-time voice-to-sign-language translation system. The complete solution spans three separate repositories, each responsible for a distinct layer of the system:

- This post (Part 1) - Frontend and Voice Processing: The React web app that captures speech, streams it to Amazon Nova 2 Sonic on Bedrock, publishes cleaned sentence text via MQTT, and renders a real-time 3D hand visualisation

- Part 2 - Cloud Infrastructure (cdk-iot-amazing-hand-streaming): The AWS CDK stack that routes IoT Core messages through Lambda to AppSync, enabling real-time GraphQL subscriptions between the edge device and the frontend

- Part 3 - Edge AI Agent (strands-agents-amazing-hands): The Strands Agent powered by Amazon Nova 2 Lite running on an NVIDIA Jetson that receives MQTT sentence text, translates it to ASL servo commands, drives the Pollen Robotics Amazing Hand for fingerspelling, and streams video and state back

In this post, I focus on how speech enters the system, how Amazon Nova 2 Sonic processes and cleans up the spoken input, and how the frontend publishes cleaned sentence text over MQTT — setting the stage for Parts 2 and 3.

The key idea is that Nova 2 Sonic is not used as a chatbot here — it is configured as a dumb speech-to-text relay pipe that cleans up grammar, removes filler words like "um" and "uh", translates non-English speech to English, and forwards the cleaned text via a forced tool invocation (send_text) on every single utterance. The frontend then publishes the cleaned sentence text to AWS IoT Core over MQTT for the edge device to translate into ASL servo commands.

Goals

- Capture speech in the browser and stream it to Amazon Nova 2 Sonic via bidirectional streaming — no backend servers required

- Use Nova 2 Sonic's forced tool use (send_text) with toolChoice: { any: {} } to relay cleaned text on every utterance, not as a conversational chatbot

- Publish cleaned sentence text to AWS IoT Core over MQTT for the edge device to translate into ASL servo commands

- Subscribe to real-time hand state updates via GraphQL (AppSync) and synchronise a 3D Three.js hand animation with the physical hand

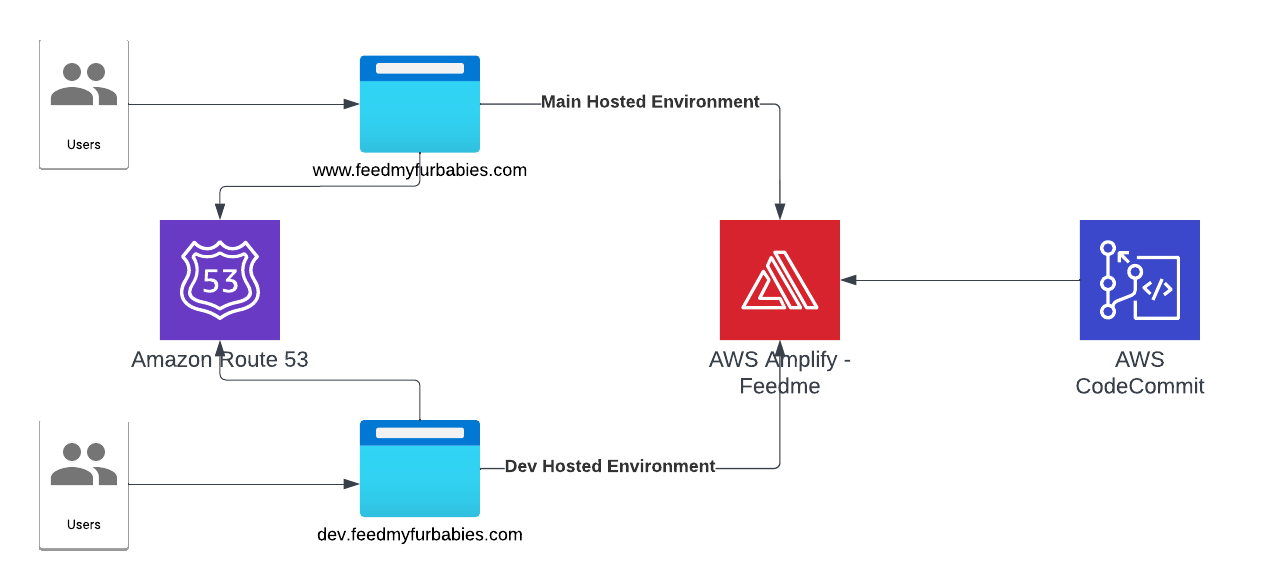

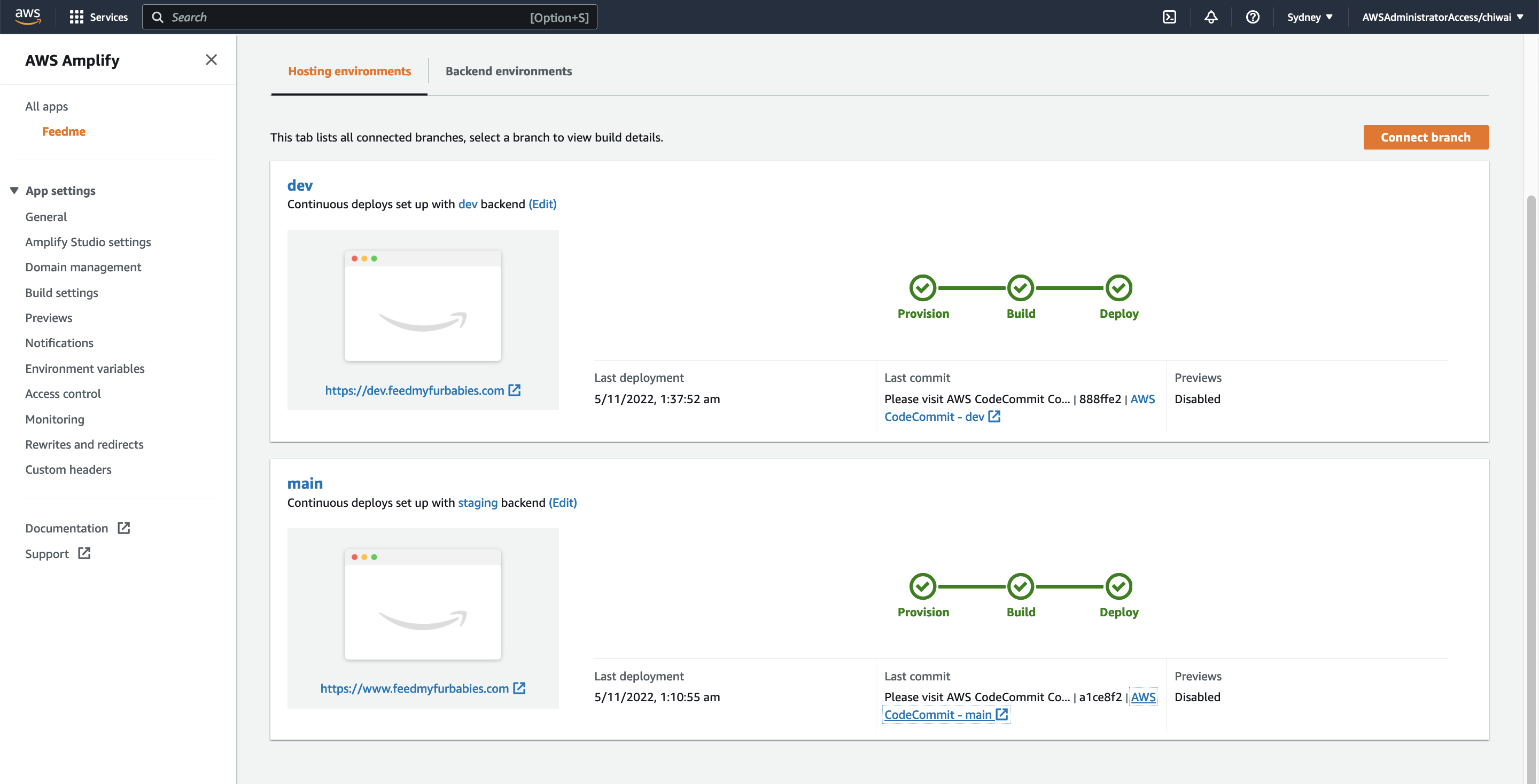

- Use AWS Amplify Gen 2 for infrastructure-as-code backend definition in TypeScript (Cognito, AppSync, IAM policies)

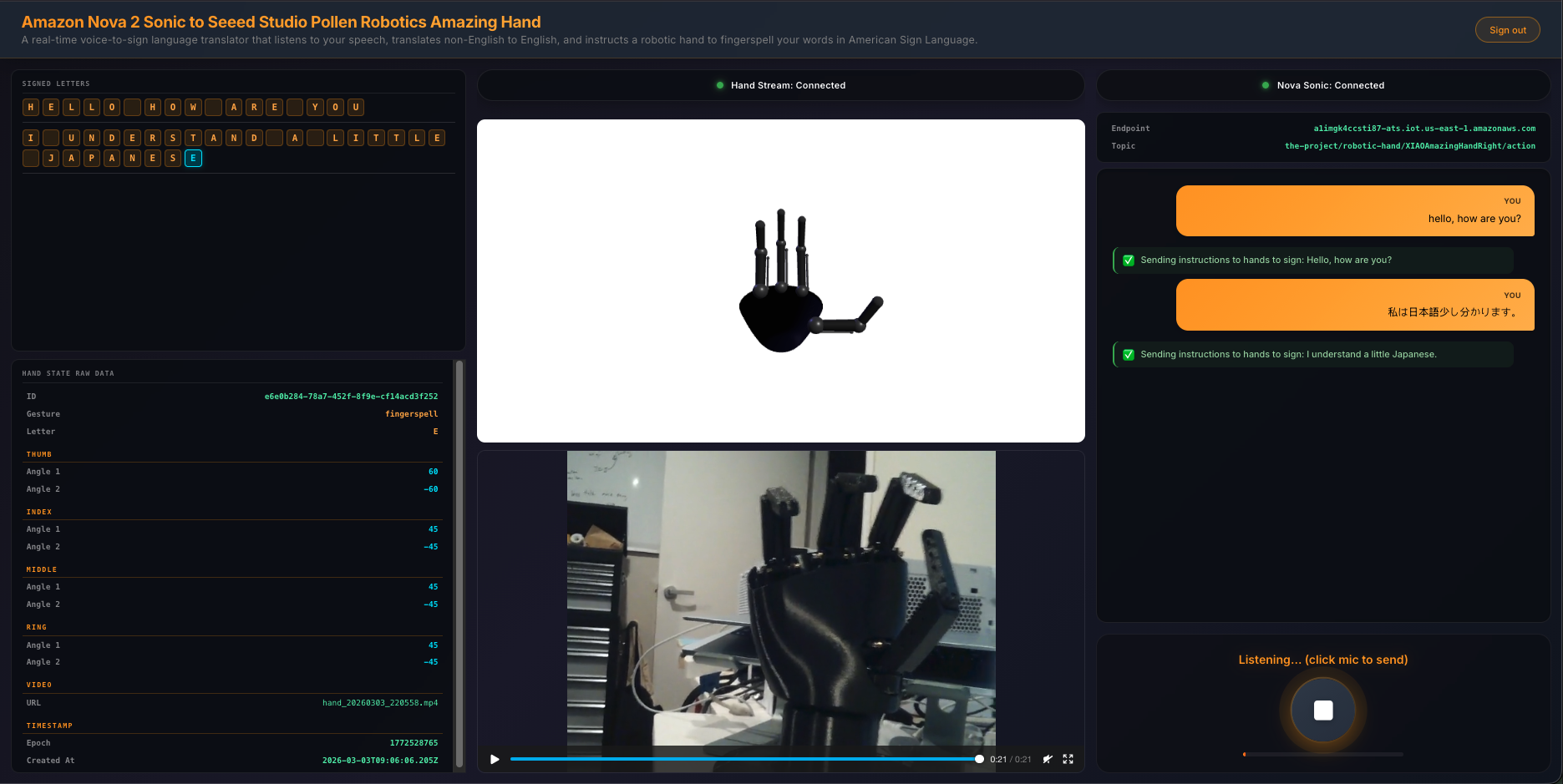

- Display a 3-column UI with signed letter history, 3D hand animation with video feed, and live transcript with microphone controls

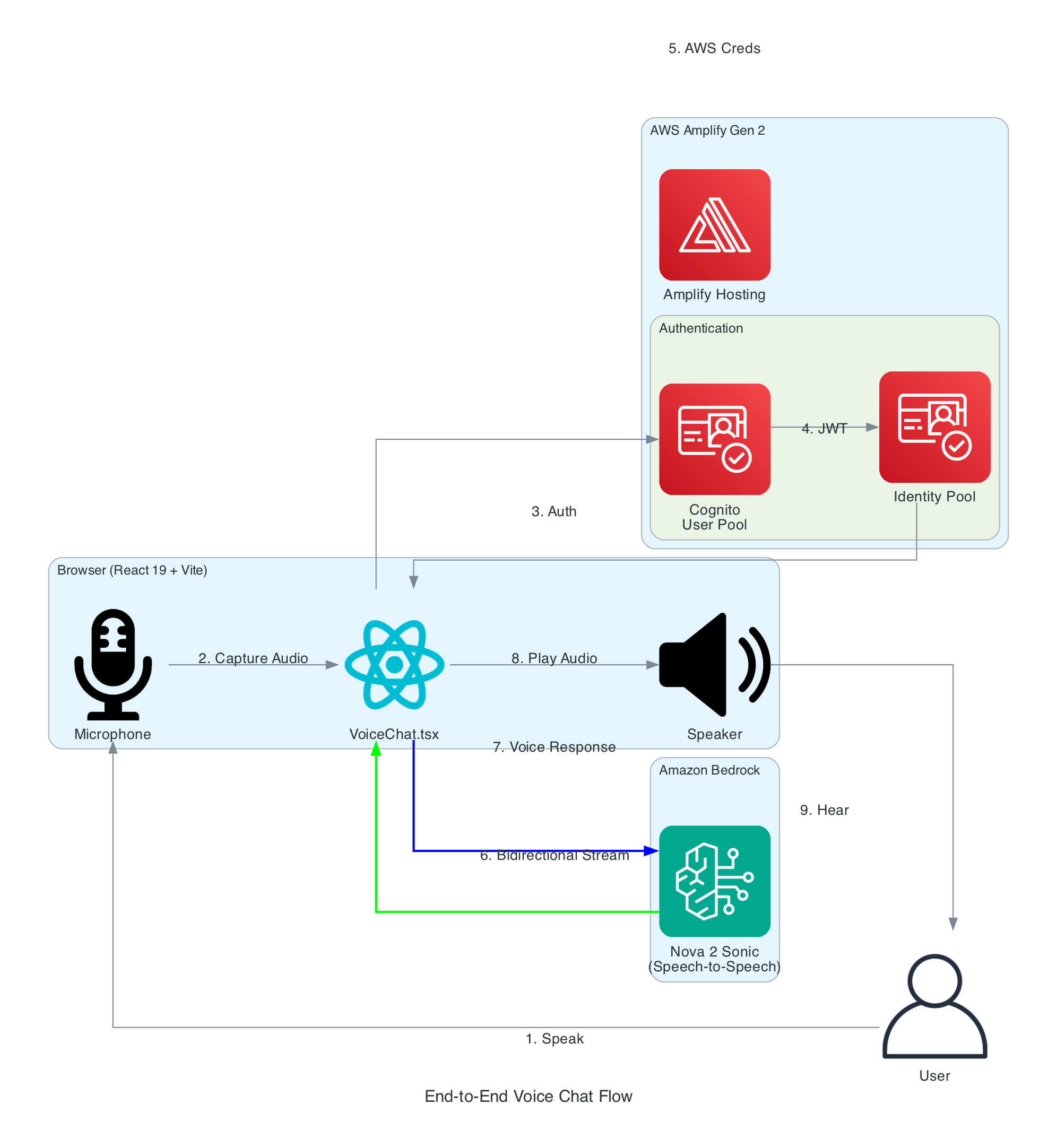

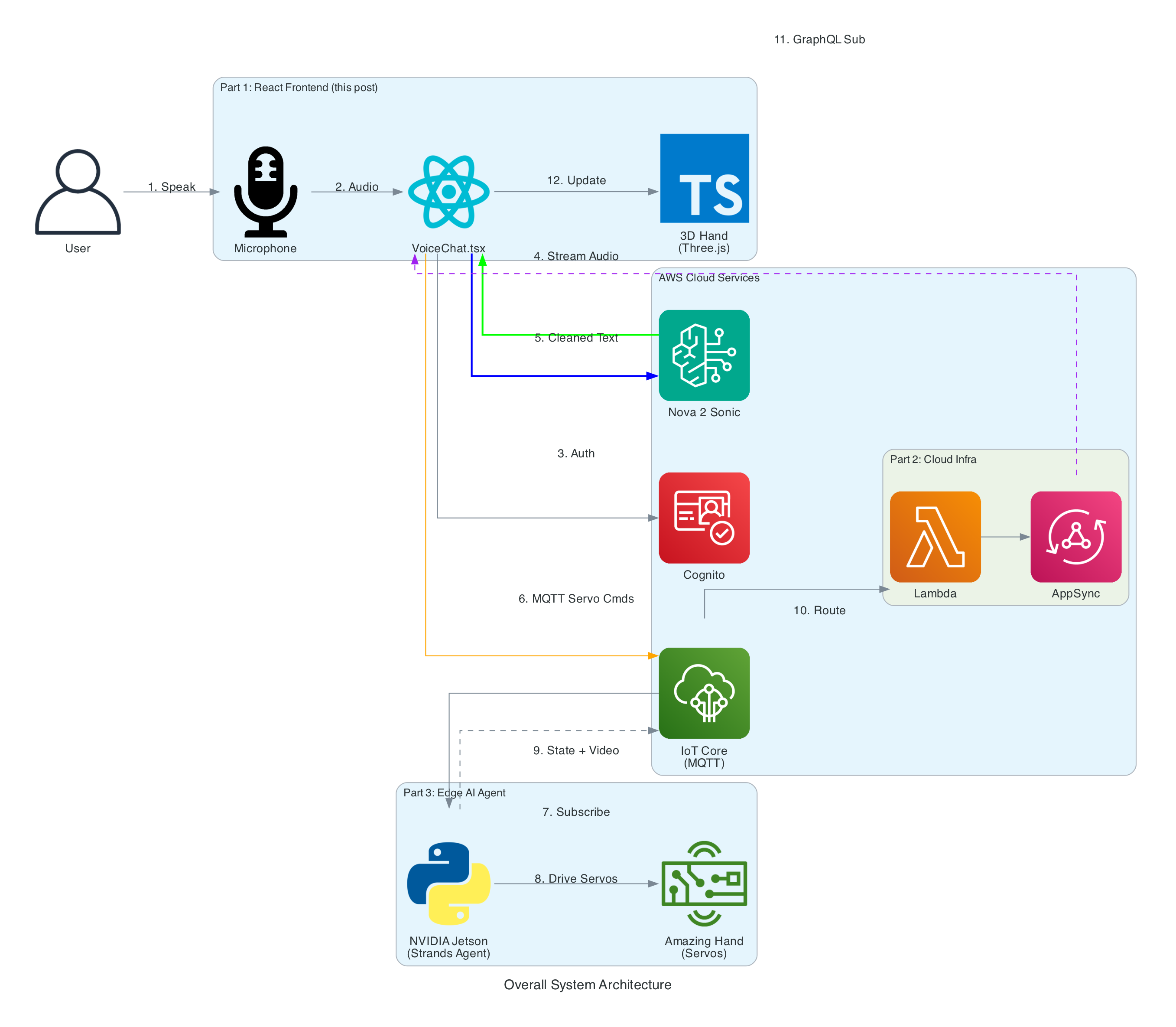

The Overall System

The end-to-end system takes spoken words from a browser microphone all the way through to physical ASL fingerspelling on an Amazing Hand — an open-source robotic hand designed by Pollen Robotics and manufactured by Seeed Studio — passing through cloud AI, IoT messaging, and an edge AI agent along the way.

System Components:

- React Frontend (this post) - Captures speech, streams to Bedrock, publishes cleaned sentence text to MQTT, renders 3D hand animation synchronised with the physical hand via GraphQL subscriptions

- Cloud Infrastructure (Part 2) - AWS CDK stack with IoT Core rules that route MQTT messages through Lambda to AppSync, enabling real-time GraphQL subscriptions between the edge device and the frontend

- Edge AI Agent (Part 3) - Strands Agent powered by Amazon Nova 2 Lite on an NVIDIA Jetson that receives MQTT sentence text, translates it to ASL servo commands, drives the Amazing Hand for fingerspelling letter by letter, records video, and publishes hand state back via IoT Core

[Interactive sequence diagram: End-to-End Voice to Sign Language Flow. From user speech to ASL fingerspelling on the Amazing Hand]

Architecture

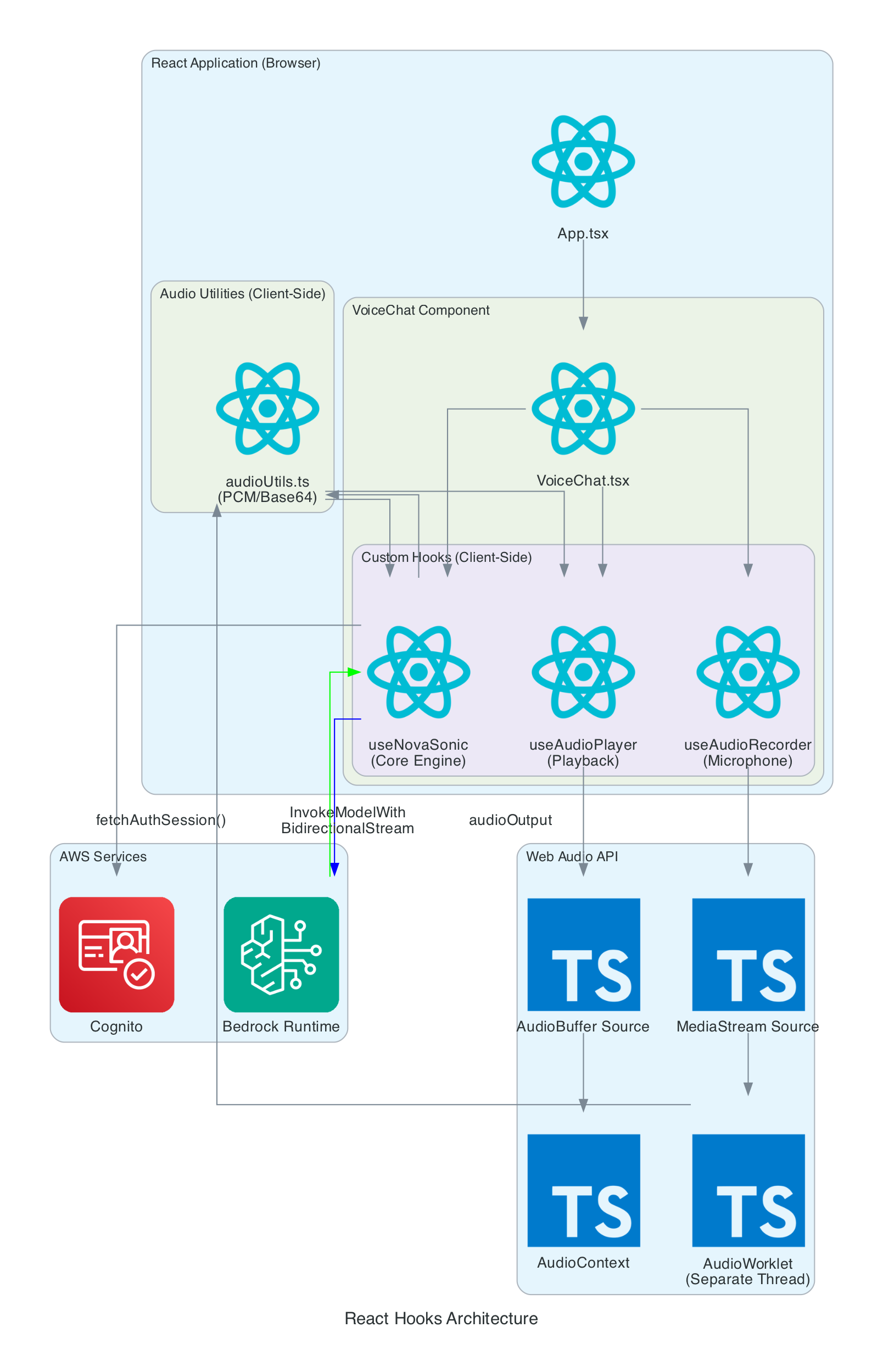

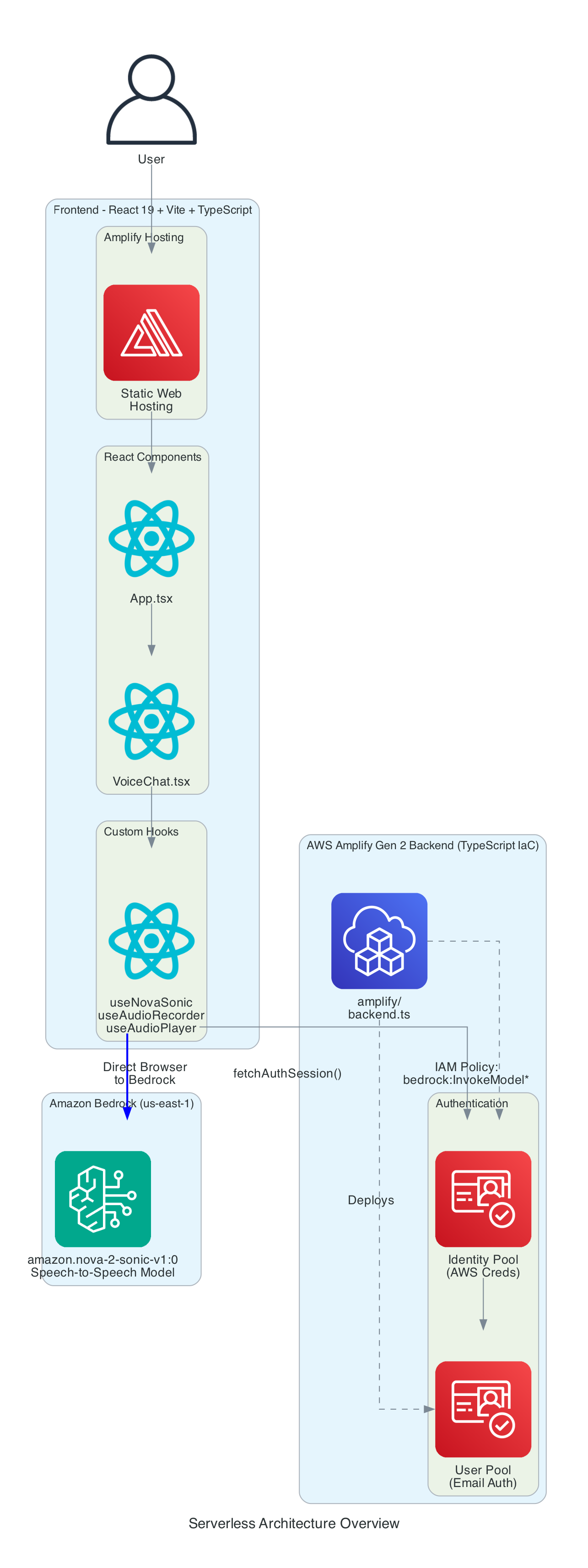

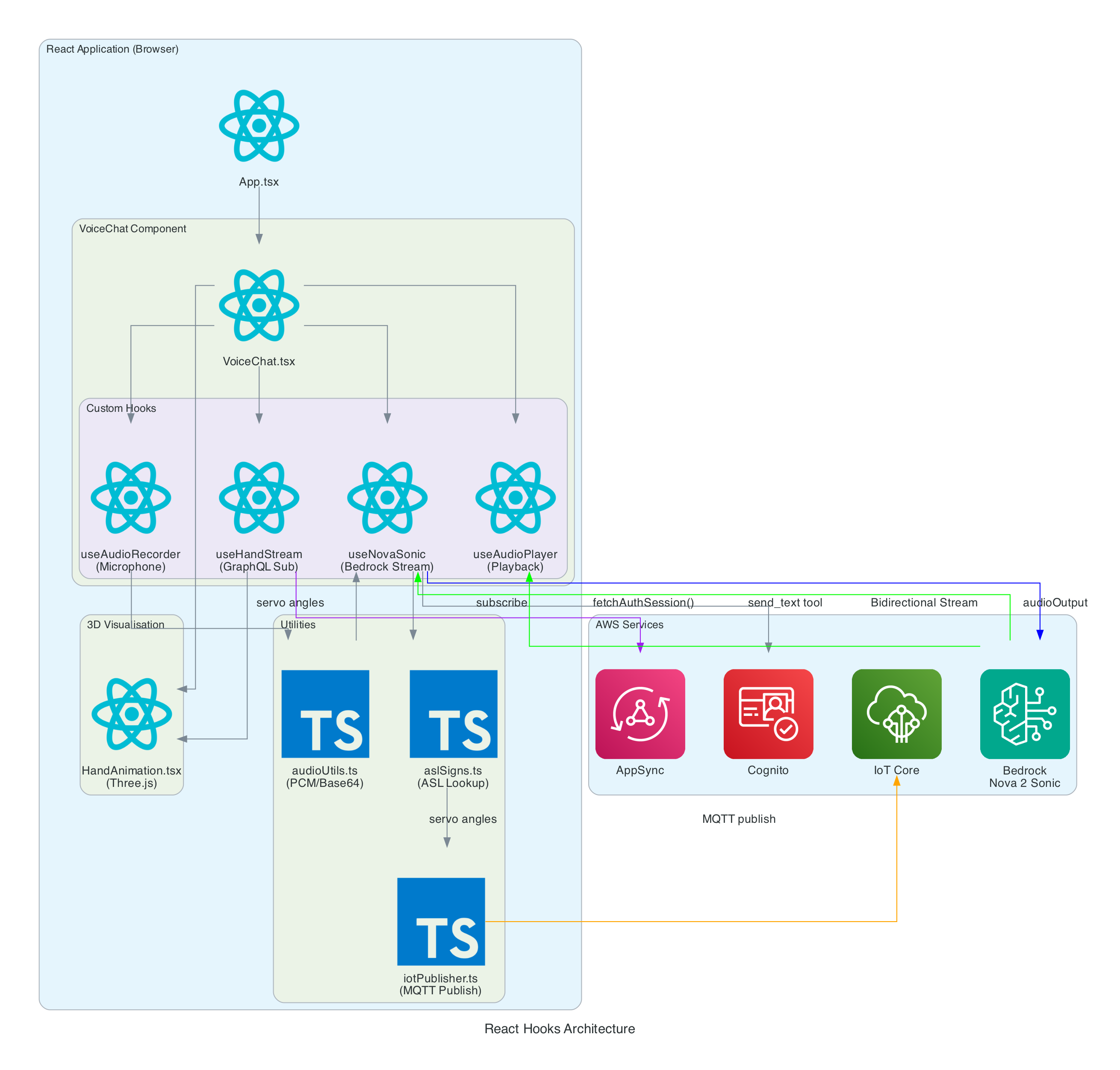

The frontend is built with React 19, Vite 7, and TypeScript 5.9. The application is structured around a main VoiceChat.tsx component that orchestrates four custom hooks, three utility modules, and a Three.js-based hand animation component.

React Hooks Architecture

Components:

- VoiceChat.tsx - Main UI component with a 3-column responsive layout. Coordinates all hooks, renders the transcript feed, microphone controls, signed letter history, hand state data grid, video feed, and 3D animation. Collapses to a single column on screens under 1100px

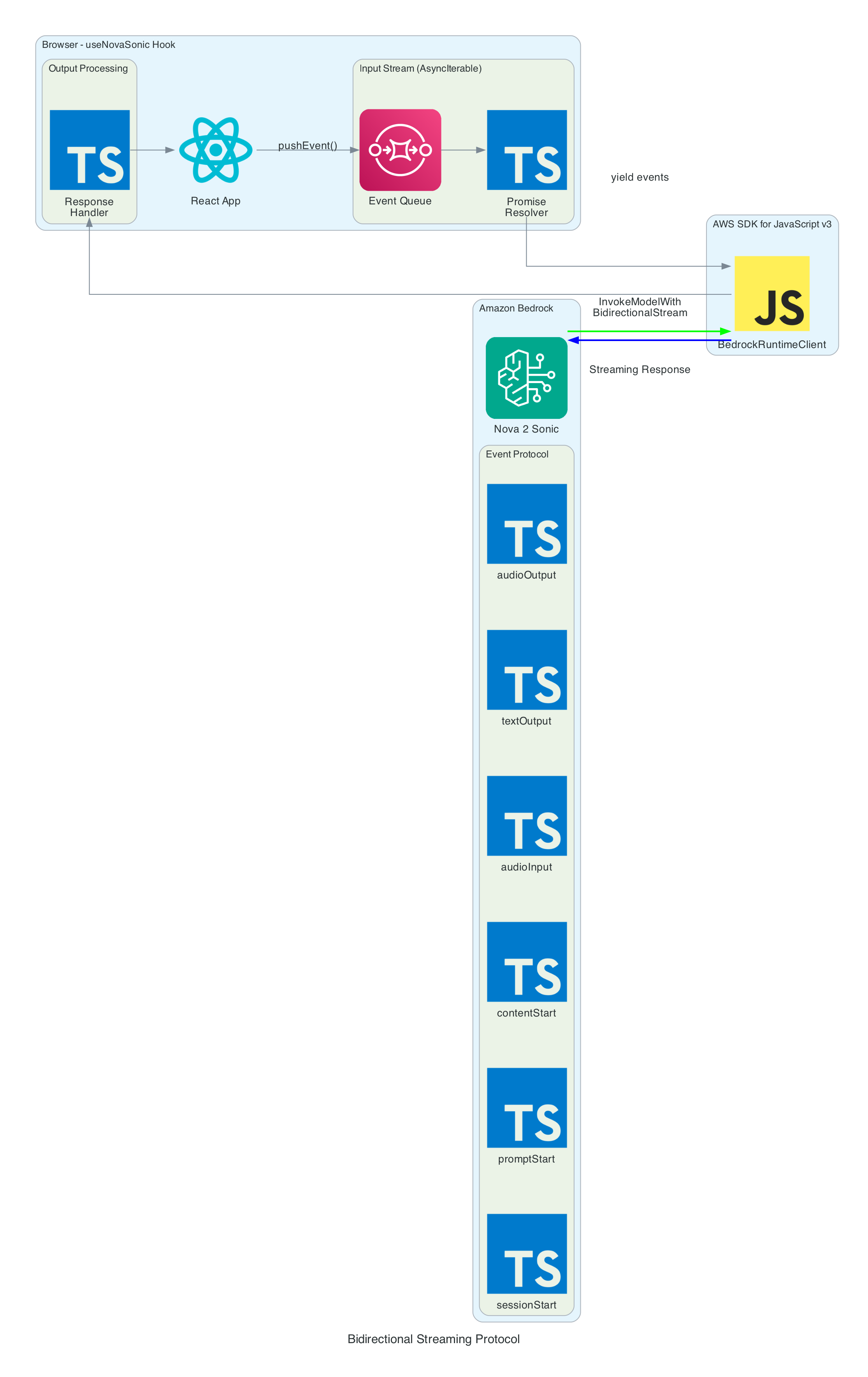

- useNovaSonic - Core hook managing the Bedrock bidirectional stream with InvokeModelWithBidirectionalStreamCommand. Handles authentication via Cognito, the Nova 2 Sonic event protocol (session/prompt/content lifecycle), the async generator input stream with backpressure, and send_text tool use responses. The tool is configured with toolChoice: { any: {} } to force tool invocation on every utterance

- useAudioRecorder - Captures microphone input using an inline AudioWorklet running in a separate thread. Accumulates 2048 samples per buffer, resamples from the device sample rate (typically 48kHz) to 16kHz, converts Float32 to PCM16, and Base64 encodes for transmission

- useAudioPlayer - Provides audio playback capability (FIFO queue of AudioBuffers at 24kHz). In the current implementation, Nova 2 Sonic's audio output is intentionally discarded since only the cleaned text via tool use is needed — the hook is available but not actively fed audio data

- useHandStream - Subscribes to the AppSync GraphQL onCreateHandState subscription filtered by device name. Fetches the last 20 hand states on mount and maintains a real-time list of 8 servo angles (thumb, index, middle, ring, each with two joint angles), letters, and video URLs

- iotPublisher.ts - Publishes MQTT messages to the topic the-project/robotic-hand/XIAOAmazingHandRight/action. Publishes cleaned sentence text as { id, sentence, ts } payloads and handles IoT policy attachment to the Cognito identity

- HandAnimation.tsx - Procedurally generated 3D robotic hand using Three.js with no external 3D models. The palm is built with LatheGeometry (curved cup shape), and each finger has a dual-joint rig (proximal + distal) with synchronised linkage. Uses WebGL rendering with PCFSoftShadowMap shadows, OrbitControls, and industrial-style materials with metalness/roughness

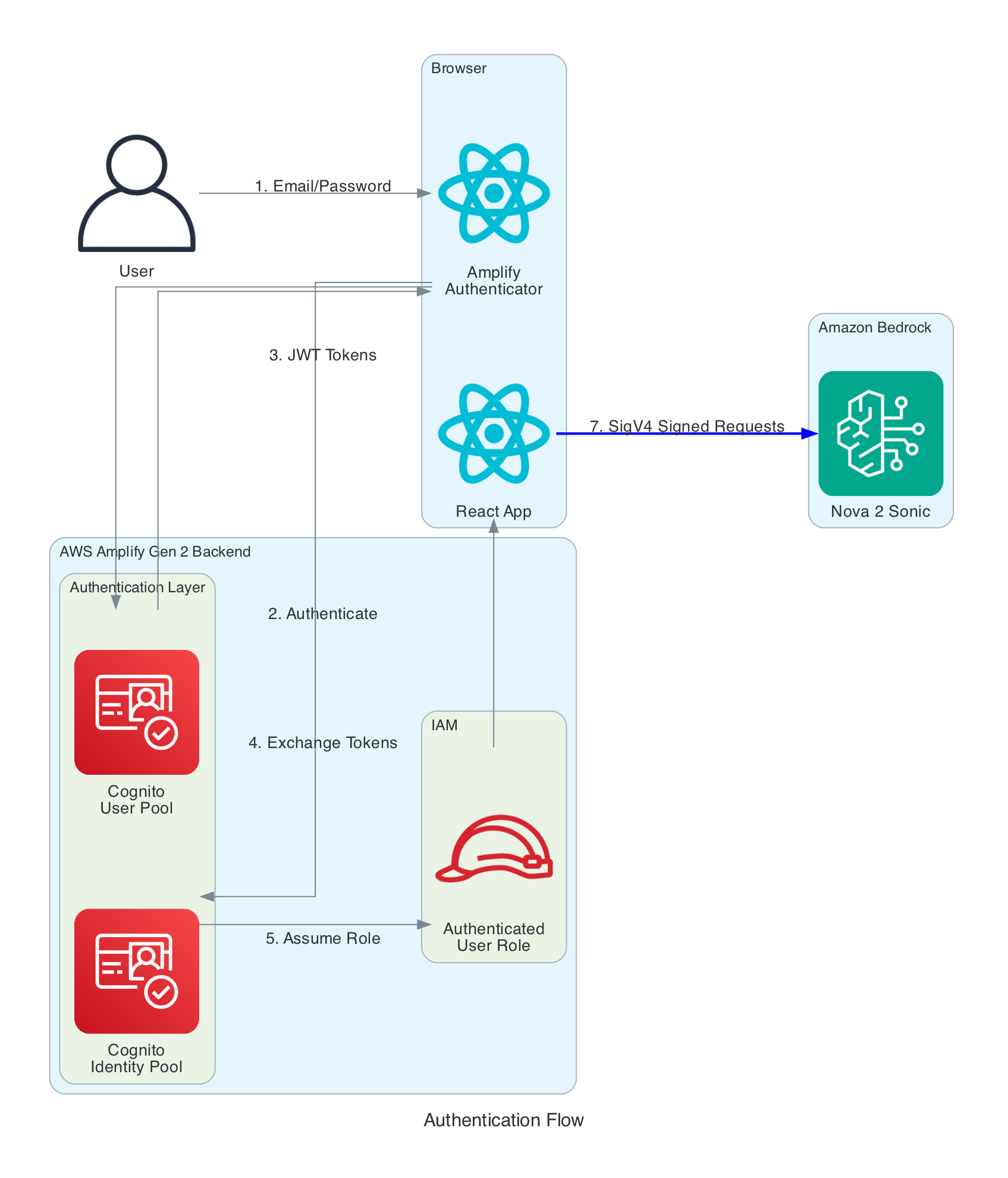

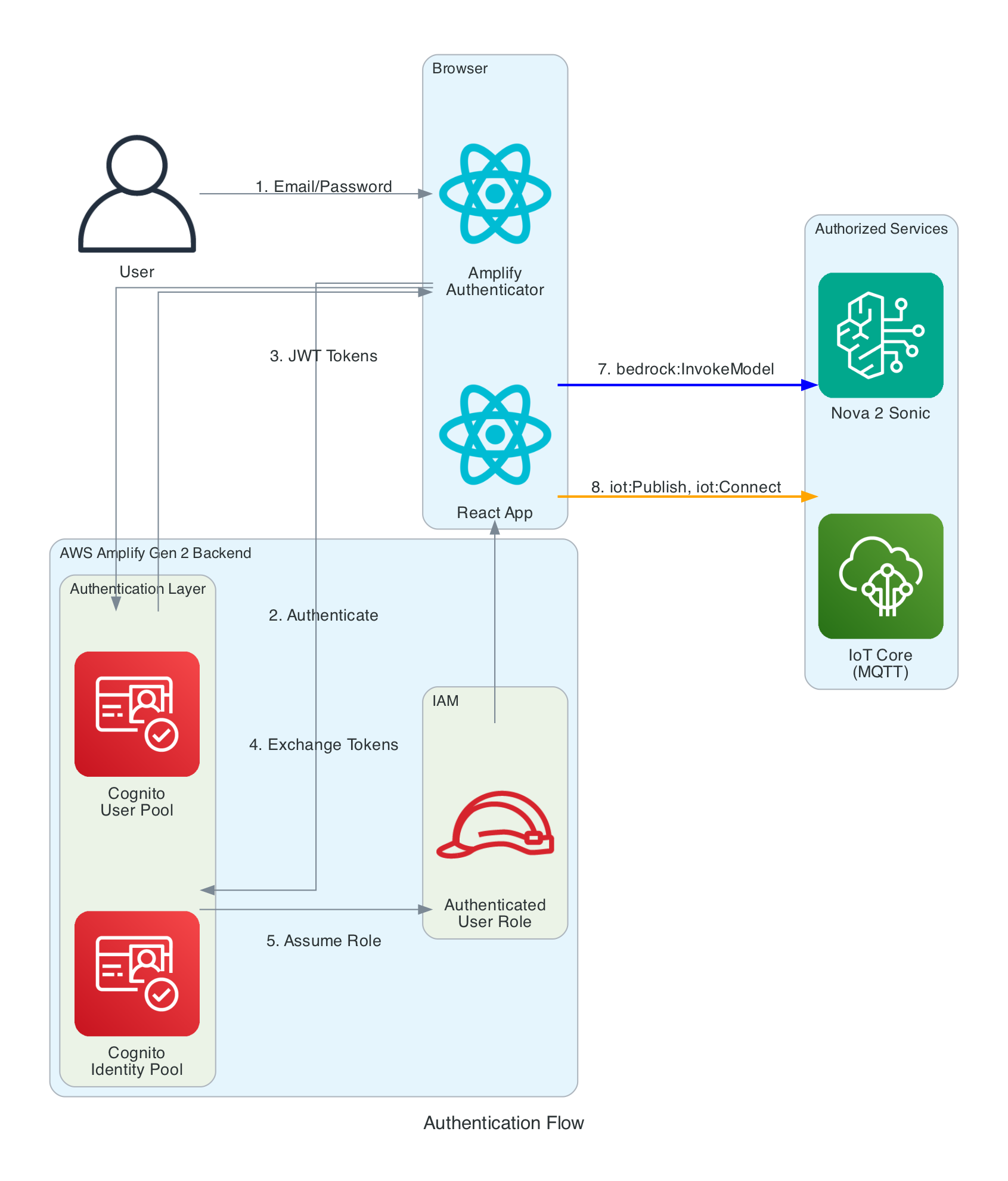

Authentication Flow

The frontend needs temporary AWS credentials to call both Bedrock (for Nova 2 Sonic streaming) and IoT Core (for MQTT publishing). No long-term credentials are stored in the browser.

Authentication Layers:

- Cognito User Pool - Handles user registration and login with email/password. Configured via Amplify Gen 2 defineAuth with preferredUsername as an optional attribute

- Cognito Identity Pool - Exchanges JWT tokens from the User Pool for temporary AWS credentials (access key, secret key, session token). Credentials are automatically refreshed by the Amplify SDK before expiration

- IAM Role - The authenticated user role grants two sets of permissions: bedrock:InvokeModel and bedrock:InvokeModelWithResponseStream scoped to amazon.nova-2-sonic-v1:0 in us-east-1, and iot:Publish, iot:Connect, iot:DescribeEndpoint, and iot:AttachPolicy for IoT Core MQTT access. An IoT Core policy (RoboticHandPolicy) is also attached to the Cognito identity at runtime to authorise MQTT publishing to the the-project/robotic-hand/* topic pattern
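To make the two permission sets concrete, here is a rough sketch of the statements written as plain TypeScript objects. It is not the repository's Amplify backend definition: the interface, the constant names, the wildcard resource on the IoT actions, and the account ID parameter are all assumptions for illustration. Only the action names, the model ID, the region, and the topic pattern come from the description above.

```typescript
// Illustrative policy shapes only; not copied from the repository.
interface Statement {
  Effect: "Allow";
  Action: string[];
  Resource: string[];
}

const REGION = "us-east-1";
const MODEL_ID = "amazon.nova-2-sonic-v1:0";

// Statements for the authenticated Cognito role.
const authenticatedRoleStatements: Statement[] = [
  {
    Effect: "Allow",
    Action: ["bedrock:InvokeModel", "bedrock:InvokeModelWithResponseStream"],
    // Foundation model ARNs have an empty account field.
    Resource: [`arn:aws:bedrock:${REGION}::foundation-model/${MODEL_ID}`],
  },
  {
    Effect: "Allow",
    Action: ["iot:Publish", "iot:Connect", "iot:DescribeEndpoint", "iot:AttachPolicy"],
    // Assumption: the post does not say how these actions are scoped.
    Resource: ["*"],
  },
];

// Hypothetical shape of the IoT Core policy attached at runtime.
function roboticHandPolicy(accountId: string): { Version: string; Statement: Statement[] } {
  return {
    Version: "2012-10-17",
    Statement: [
      {
        Effect: "Allow",
        Action: ["iot:Publish"],
        Resource: [`arn:aws:iot:${REGION}:${accountId}:topic/the-project/robotic-hand/*`],
      },
    ],
  };
}
```

Both layers have to allow a publish: the IAM role on the Cognito identity and the IoT Core policy attached to that same identity.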

How it works

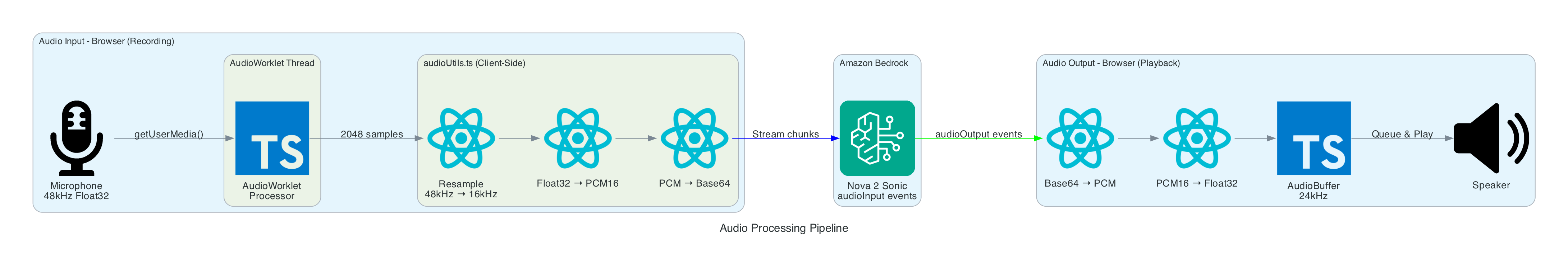

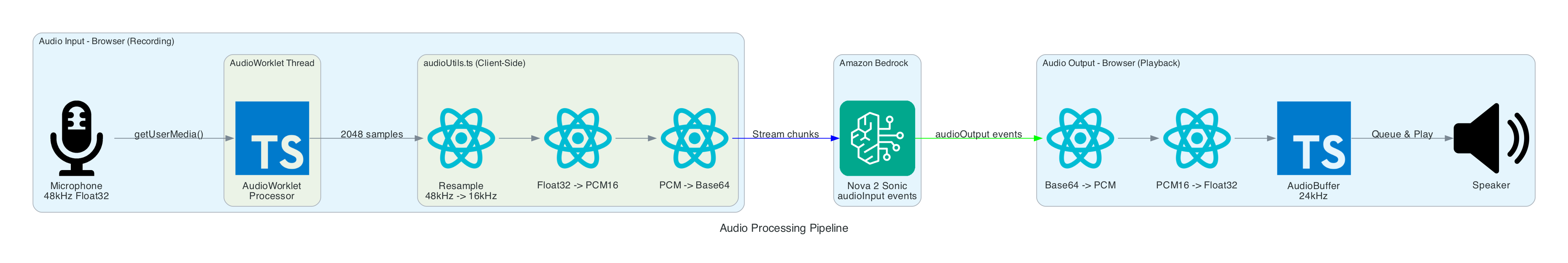

Audio Capture and Processing

The browser captures audio from the microphone using the Web Audio API and an AudioWorklet running in a separate thread. The AudioWorklet avoids main-thread blocking and processes audio in real-time with echo cancellation and noise suppression enabled.

Input Processing (Recording):

- Microphone - Browser calls getUserMedia() to capture audio at the device's native sample rate (typically 48kHz) with mono channel, echo cancellation, and noise suppression enabled

- AudioWorklet - An inline AudioCaptureProcessor (loaded as a Blob URL to avoid CORS issues) runs in a separate thread. It accumulates samples in a buffer and posts a Float32Array message to the main thread every 2048 samples

- Resample - Linear interpolation resampling converts from 48kHz to 16kHz (Nova 2 Sonic's required input rate). The ratio is calculated dynamically from the actual device sample rate

- Float32 to PCM16 - Floating point samples in the range [-1, 1] are converted to 16-bit signed integers. Negative values are multiplied by 0x8000 and positive values by 0x7FFF

- Base64 Encode - The binary PCM data is encoded to Base64 text for JSON transmission to Bedrock via a custom uint8ArrayToBase64() utility that iterates bytes into a binary string and then calls btoa()
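The resample, convert, and encode steps above can be sketched as a few small pure functions. This is a minimal sketch rather than the repository's code: resampleLinear, floatToPcm16, and encodeChunk are names chosen for illustration (uint8ArrayToBase64 is the utility named above), and the byte layout assumes a little-endian platform.

```typescript
/** Linear-interpolation resampler, e.g. 48000 Hz down to 16000 Hz. */
function resampleLinear(input: Float32Array, fromRate: number, toRate: number): Float32Array {
  if (fromRate === toRate) return input;
  const ratio = fromRate / toRate; // 3 when going from 48 kHz to 16 kHz
  const outLength = Math.floor(input.length / ratio);
  const output = new Float32Array(outLength);
  for (let i = 0; i < outLength; i++) {
    const pos = i * ratio;
    const left = Math.floor(pos);
    const right = Math.min(left + 1, input.length - 1);
    const frac = pos - left;
    // Blend the two nearest source samples.
    output[i] = input[left] * (1 - frac) + input[right] * frac;
  }
  return output;
}

/** Convert floats in [-1, 1] to signed 16-bit integers. */
function floatToPcm16(input: Float32Array): Int16Array {
  const output = new Int16Array(input.length);
  for (let i = 0; i < input.length; i++) {
    const s = Math.max(-1, Math.min(1, input[i])); // clamp out-of-range samples
    output[i] = s < 0 ? s * 0x8000 : s * 0x7fff;
  }
  return output;
}

/** Base64-encode raw bytes by building a binary string for btoa(). */
function uint8ArrayToBase64(bytes: Uint8Array): string {
  let binary = "";
  for (let i = 0; i < bytes.length; i++) {
    binary += String.fromCharCode(bytes[i]);
  }
  return btoa(binary);
}

/** Full path from one captured chunk to the Base64 string sent to Bedrock. */
function encodeChunk(samples: Float32Array, deviceRate: number): string {
  const pcm = floatToPcm16(resampleLinear(samples, deviceRate, 16000));
  return uint8ArrayToBase64(new Uint8Array(pcm.buffer));
}
```

With a 48kHz device, each 2048-sample chunk from the worklet becomes 682 samples at 16kHz, roughly 43ms of audio per message.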

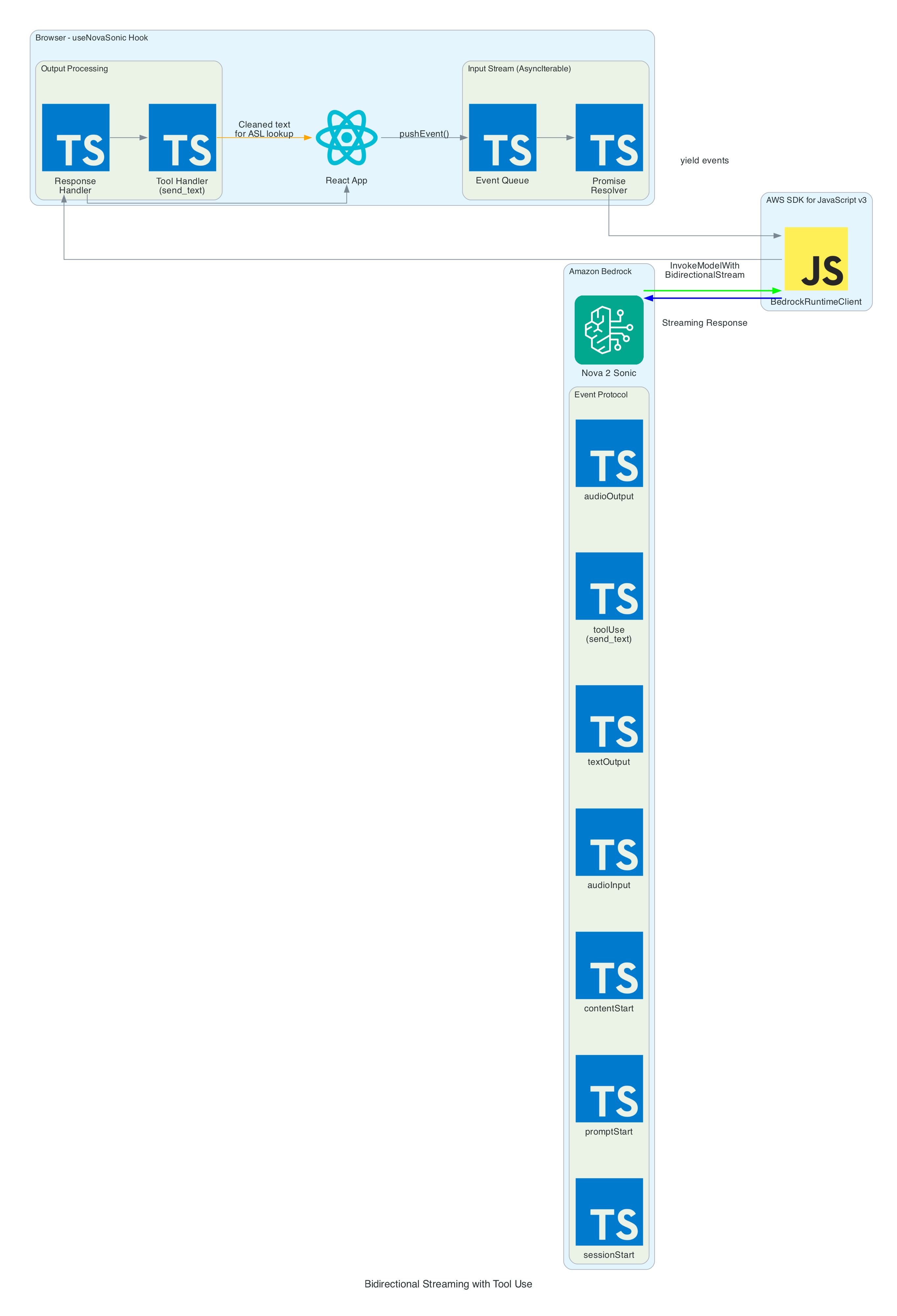

Amazon Nova 2 Sonic Bidirectional Streaming

The heart of the system is the bidirectional stream to Amazon Nova 2 Sonic using InvokeModelWithBidirectionalStreamCommand. Nova 2 Sonic is configured not as a chatbot, but as a speech relay that cleans up input and forwards it via forced tool use.

Input Events (sent to Bedrock):

- sessionStart - Initialises the session with inference configuration: maxTokens: 1024, topP: 0.9, temperature: 0.7

- promptStart - Configures audio output format: audio/lpcm at 24kHz, 16-bit, mono, voice matthew, Base64 encoding. Also defines the send_text tool with toolChoice: { any: {} } to force tool invocation on every utterance

- contentStart (TEXT) - Sends the system prompt that instructs Nova 2 Sonic to act as a "dumb speech-to-text relay pipe": clean up grammar, remove filler words, translate non-English to English, call send_text with the cleaned text, then respond with only "Sent"

- contentStart (AUDIO) - Marks the beginning of audio input content

- audioInput - Streams Base64-encoded 16kHz PCM audio chunks in real-time as the user speaks

- contentEnd / promptEnd / sessionEnd - Lifecycle events to terminate content blocks, prompts, and sessions
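The opening events of a session might look roughly like the sketch below. The values (token limit, sample rates, voice, tool name, toolChoice) come from the list above. The exact field names reflect my understanding of the Nova Sonic event schema and should be checked against the Bedrock documentation. The function name, the tool description, and the JSON schema for send_text are assumptions for illustration.

```typescript
type SonicEvent = { event: Record<string, unknown> };

// Hypothetical helper: builds the events sent before any audio is streamed.
function buildOpeningEvents(
  promptName: string,
  systemContentName: string,
  audioContentName: string,
  systemPrompt: string,
): SonicEvent[] {
  // Assumed schema for the single-parameter send_text tool.
  const sendTextSchema = JSON.stringify({
    type: "object",
    properties: { sentence: { type: "string" } },
    required: ["sentence"],
  });
  return [
    { event: { sessionStart: { inferenceConfiguration: { maxTokens: 1024, topP: 0.9, temperature: 0.7 } } } },
    { event: { promptStart: {
      promptName,
      textOutputConfiguration: { mediaType: "text/plain" },
      audioOutputConfiguration: {
        mediaType: "audio/lpcm", sampleRateHertz: 24000, sampleSizeBits: 16,
        channelCount: 1, voiceId: "matthew", encoding: "base64", audioType: "SPEECH",
      },
      toolUseOutputConfiguration: { mediaType: "application/json" },
      toolConfiguration: {
        tools: [{ toolSpec: {
          name: "send_text",
          description: "Forward the cleaned sentence", // illustrative wording
          inputSchema: { json: sendTextSchema },
        } }],
        toolChoice: { any: {} }, // force a tool call on every utterance
      },
    } } },
    // System prompt as a TEXT content block.
    { event: { contentStart: {
      promptName, contentName: systemContentName, type: "TEXT", interactive: false,
      role: "SYSTEM", textInputConfiguration: { mediaType: "text/plain" },
    } } },
    { event: { textInput: { promptName, contentName: systemContentName, content: systemPrompt } } },
    { event: { contentEnd: { promptName, contentName: systemContentName } } },
    // Open the AUDIO content block; audioInput chunks follow this event.
    { event: { contentStart: {
      promptName, contentName: audioContentName, type: "AUDIO", interactive: true,
      role: "USER",
      audioInputConfiguration: {
        mediaType: "audio/lpcm", sampleRateHertz: 16000, sampleSizeBits: 16,
        channelCount: 1, audioType: "SPEECH", encoding: "base64",
      },
    } } },
  ];
}
```

In the app, events like these would be yielded one by one from the async generator that feeds the bidirectional stream, followed by audioInput events for as long as the microphone is open.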

Output Events (received from Bedrock):

- textOutput - Returns transcribed user speech and the generated AI response text ("Sent")

- toolUse - The send_text tool invocation containing the cleaned text in { sentence: "..." } format. This is the primary output: the frontend publishes the sentence to MQTT for the edge device to translate into ASL servo commands

- audioOutput - Synthesised voice response as Base64-encoded 24kHz PCM. In the current implementation, audio output is intentionally discarded since only the cleaned text via tool use is needed

Tool Use — send_text:

- The tool is defined with toolChoice: { any: {} }, which forces Nova 2 Sonic to call it on every single utterance without exception

- The tool accepts a single sentence parameter: the cleaned-up, well-formed sentence

- When the tool invocation arrives, the frontend extracts the sentence and publishes it as { id, sentence, ts } to IoT Core via MQTT using publishSentence(). The edge device then translates the sentence into ASL servo commands

- A JSON tool result ({ "status": "success", "sentence": "..." }) is sent back to Nova 2 Sonic to complete the tool use cycle

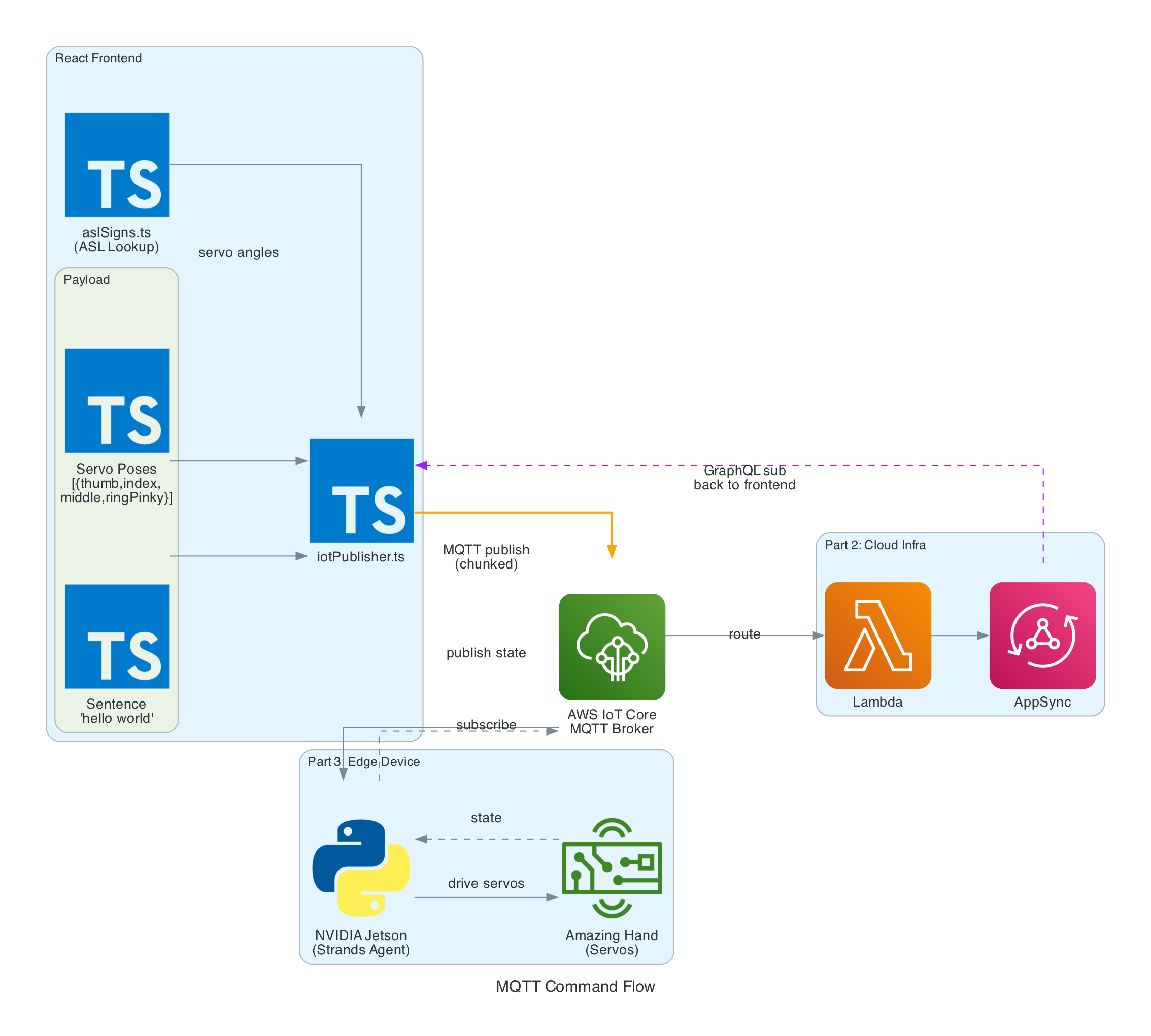
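The tool use cycle described above can be sketched in two steps: pull the sentence out of the toolUse event, then build the events that return the tool result. This is a sketch under assumptions, not the hook's actual code: I assume the toolUse event carries its arguments as a JSON string in a content field alongside toolName and toolUseId, and the function and interface names are hypothetical.

```typescript
// Assumed subset of the toolUse output event.
interface ToolUseOutput {
  toolUseId: string;
  toolName: string;
  content: string; // JSON string such as '{"sentence":"..."}'
}

/** Returns the cleaned sentence, or null if the event is not a usable send_text call. */
function extractSentence(toolUse: ToolUseOutput): string | null {
  if (toolUse.toolName !== "send_text") return null;
  try {
    const parsed: unknown = JSON.parse(toolUse.content);
    if (typeof parsed === "object" && parsed !== null) {
      const sentence = (parsed as { sentence?: unknown }).sentence;
      if (typeof sentence === "string" && sentence.length > 0) return sentence;
    }
  } catch {
    // Malformed JSON: treat as no sentence.
  }
  return null;
}

/** Builds the three events that hand the tool result back to the model. */
function buildToolResultEvents(
  promptName: string,
  contentName: string,
  toolUseId: string,
  sentence: string,
) {
  return [
    { event: { contentStart: {
      promptName, contentName, interactive: false, type: "TOOL", role: "TOOL",
      toolResultInputConfiguration: {
        toolUseId, type: "TEXT", textInputConfiguration: { mediaType: "text/plain" },
      },
    } } },
    { event: { toolResult: {
      promptName, contentName,
      content: JSON.stringify({ status: "success", sentence }),
    } } },
    { event: { contentEnd: { promptName, contentName } } },
  ];
}
```

Returning null for anything unexpected keeps a malformed tool call from publishing an empty or garbage sentence to the hand.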

Publishing Sentences via MQTT

Once the cleaned sentence is extracted from the send_text tool invocation, iotPublisher.ts publishes it to the MQTT topic the-project/robotic-hand/XIAOAmazingHandRight/action via AWS IoT Core.

The payload is a simple JSON object containing:

- id - A UUID for the message

- sentence - The cleaned sentence text from Nova 2 Sonic

- ts - Unix timestamp in seconds

The edge device (covered in Part 3) receives this sentence and is responsible for translating it into ASL servo commands and driving the physical hand.
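Building the payload is simple enough to show in full. The sketch below is an illustration, not the code in iotPublisher.ts: buildSentencePayload and the constant name are hypothetical, and the caller is assumed to supply the UUID (for example from crypto.randomUUID() in the browser).

```typescript
const ACTION_TOPIC = "the-project/robotic-hand/XIAOAmazingHandRight/action";

interface SentencePayload {
  id: string;       // UUID for the message
  sentence: string; // cleaned sentence text from Nova 2 Sonic
  ts: number;       // Unix timestamp in seconds
}

// Hypothetical helper: the clock is injectable so the function stays testable.
function buildSentencePayload(
  sentence: string,
  id: string,
  nowMs: number = Date.now(),
): SentencePayload {
  return { id, sentence, ts: Math.floor(nowMs / 1000) };
}

// The serialised message is what gets handed to the MQTT client for ACTION_TOPIC.
const message = JSON.stringify(
  buildSentencePayload("Hello world", "00000000-0000-4000-8000-000000000000", 1700000000999),
);
```

Date.now() returns milliseconds, so the division and floor are what turn it into the seconds value described above.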

[Interactive sequence diagram: MQTT Command Pipeline. From Nova 2 Sonic text output to IoT Core sentence publish]

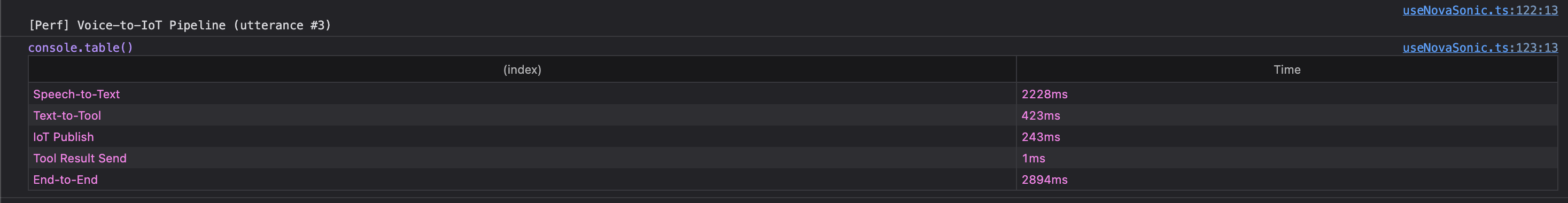

The browser console logs a performance breakdown for each utterance as it passes through the voice-to-IoT pipeline. In this example, the end-to-end time from speech detection to IoT publish is approximately 2.9 seconds (2894ms in total). Most of that is spent on Speech-to-Text (2228ms) while Nova 2 Sonic processes the audio, followed by Text-to-Tool extraction (423ms) and IoT Publish (243ms).
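As a quick check on those figures, the three stage timings can be summed and turned into shares of the total. The helper below is a small sketch of my own; only the stage names and millisecond values come from the example.

```typescript
interface Stage {
  name: string;
  ms: number;
}

// Hypothetical helper: total time plus each stage's rounded share of it.
function summariseStages(stages: Stage[]) {
  const totalMs = stages.reduce((sum, s) => sum + s.ms, 0);
  const shares = stages.map((s) => ({
    name: s.name,
    percent: Math.round((s.ms / totalMs) * 100),
  }));
  return { totalMs, shares };
}

const breakdown = summariseStages([
  { name: "Speech-to-Text", ms: 2228 },
  { name: "Text-to-Tool", ms: 423 },
  { name: "IoT Publish", ms: 243 },
]);
// breakdown.totalMs is 2894, and the shares come out at 77%, 15%, and 8%.
```

So roughly three quarters of the latency in this example sits in the speech processing stage, not in the MQTT publish.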

Real-Time Hand State via GraphQL Subscription

The frontend subscribes to AppSync's onCreateHandState GraphQL subscription to receive real-time updates from the edge device. Each update includes the device name, current letter being signed, all 8 servo angles (thumb, index, middle, ring — each with two joint angles), a timestamp, and an optional video URL.

On mount, the hook fetches the last 20 hand states to populate the UI immediately. New states arrive in real-time as the edge device publishes them back through IoT Core → Lambda → AppSync. The data is displayed in both the signed letter history panel and the raw hand state data grid.
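The list handling can be sketched independently of AppSync. In the sketch below, the HandState field names, the cap of 20 on the live list, and the client-side device check are all assumptions for illustration. The post only states that the last 20 states are fetched on mount and that the subscription is filtered by device name.

```typescript
// Hypothetical shape; the real GraphQL model may name these fields differently.
interface HandState {
  deviceName: string;
  letter: string;
  servoAngles: number[]; // 8 values: thumb, index, middle, ring, two joints each
  timestamp: string;
  videoUrl?: string;
}

const HISTORY_LIMIT = 20;

/** Prepends a new state (newest first) and trims the list to the limit. */
function mergeHandState(
  history: HandState[],
  incoming: HandState,
  deviceName: string,
  limit: number = HISTORY_LIMIT,
): HandState[] {
  // Defensive guard in case an update for another device slips through.
  if (incoming.deviceName !== deviceName) return history;
  return [incoming, ...history].slice(0, limit);
}
```

Inside a React hook, this would typically be called from the subscription's next handler through a functional state update, so each update sees the latest list.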

3D Hand Visualisation

The HandAnimation.tsx component renders a procedurally generated 3D robotic hand using Three.js — no external 3D models are loaded. The entire hand is built from code:

- The palm uses LatheGeometry to create a curved cup shape that tapers from a narrow wrist (radius 0.18) to wide knuckles (radius 0.56)

- Each finger has a dual-joint rig with proximal and distal segments, knuckle joints, linkage bars, and fingertips. The thumb is mounted on the side of the palm and rotates on the Z-axis, while the index, middle, and ring fingers are mounted on the front rim and rotate on the X-axis

- The distal joint automatically follows the proximal joint at 50% of its angle, simulating a synchronised linkage mechanism

- Materials use industrial-style metalness/roughness: dark gray frame (0x2a2a2a), light gray joints (0x888888), and darker gray tips (0x555555)

- The scene includes PCFSoftShadowMap shadows, ambient lighting (0.8), directional light (1.0), and a fill light (0.4), with OrbitControls for interactive zoom and rotation

Servo angle updates from the GraphQL subscription drive the finger rotations in real-time, keeping the 3D animation synchronised with the physical Amazing Hand.
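The joint linkage reduces to a small calculation. The sketch below assumes the proximal angle arrives in degrees and leaves out how the two servo angles per finger combine into that angle, since the post does not describe it. The function and type names are hypothetical.

```typescript
type Finger = "thumb" | "index" | "middle" | "ring";

const DISTAL_FOLLOW_RATIO = 0.5; // distal joint follows at 50% of the proximal angle

function degToRad(deg: number): number {
  return (deg * Math.PI) / 180;
}

/** Rotation axis and joint angles (radians) for one finger. */
function jointRotations(finger: Finger, proximalDeg: number) {
  const proximal = degToRad(proximalDeg);
  return {
    axis: finger === "thumb" ? "z" : "x", // thumb bends on Z, other fingers on X
    proximal,
    distal: proximal * DISTAL_FOLLOW_RATIO,
  };
}

// Usage idea with Three.js objects (not executed here):
//   const r = jointRotations("index", 60);
//   proximalGroup.rotation.x = r.proximal;
//   distalGroup.rotation.x = r.distal;
```

If the distal segment is parented to the proximal segment in the scene graph, its world rotation is the sum of both angles, which is what gives the curled look from one input.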

Audio Playback

The useAudioPlayer hook provides a FIFO queue-based audio playback capability for Web Audio AudioBuffer objects at 24kHz. However, in the current implementation, Nova 2 Sonic's audio output is intentionally discarded — the onAudioOutput callback is set to a no-op since only the cleaned text via the send_text tool use is needed to drive the MQTT pipeline. The hook remains available for future use if audio feedback is desired.

Technical Challenges & Solutions

Challenge 1: AudioWorklet CORS Issues

Problem: Loading an AudioWorklet processor from an external JavaScript file fails with CORS errors on some deployments, particularly when using Amplify Hosting.

Solution: Inline the AudioWorklet code as a Blob URL. The processor code is defined as a string, converted to a Blob with type application/javascript, and loaded via URL.createObjectURL(). The object URL is revoked after the module is added:

const blob = new Blob([audioWorkletCode], { type: 'application/javascript' });

const workletUrl = URL.createObjectURL(blob);

await audioContext.audioWorklet.addModule(workletUrl);

URL.revokeObjectURL(workletUrl);
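The processor code inlined this way has to bridge two block sizes: the Web Audio API hands a worklet 128 frames per process() call, while the main thread expects a message every 2048 samples. A sketch of that accumulation logic, written as a plain class so it can run outside a worklet, is below. SampleAccumulator is a hypothetical name, and the real AudioCaptureProcessor may buffer differently.

```typescript
class SampleAccumulator {
  private buffer: Float32Array;
  private filled = 0;
  private size: number;
  private onChunk: (chunk: Float32Array) => void;

  constructor(onChunk: (chunk: Float32Array) => void, size: number = 2048) {
    this.onChunk = onChunk;
    this.size = size;
    this.buffer = new Float32Array(size);
  }

  /** Feed one block of samples; emits a copy each time the buffer fills. */
  push(block: Float32Array): void {
    let offset = 0;
    while (offset < block.length) {
      const n = Math.min(this.size - this.filled, block.length - offset);
      this.buffer.set(block.subarray(offset, offset + n), this.filled);
      this.filled += n;
      offset += n;
      if (this.filled === this.size) {
        // Copy so later writes do not mutate the emitted chunk.
        this.onChunk(this.buffer.slice());
        this.filled = 0;
      }
    }
  }
}

// Inside a worklet, process() would call push(inputs[0][0]) and the
// onChunk callback would call this.port.postMessage(chunk).
```

Sixteen 128-frame blocks fill one 2048-sample chunk, so at 48kHz the main thread receives a message about every 43ms.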

Challenge 2: Forcing Tool Use on Every Utterance

Problem: Nova 2 Sonic is a conversational model by default — it wants to chat and respond naturally. But in this system, it needs to act as a pure relay, forwarding every single utterance as cleaned text without adding commentary or refusing any messages.

Solution: A combination of system prompt engineering and forced tool use. The system prompt explicitly instructs Nova 2 Sonic to act as a "dumb speech-to-text relay pipe" and never add commentary. The send_text tool is configured with toolChoice: { any: {} }, which forces the model to invoke a tool on every response. After calling the tool, it is instructed to only respond with "Sent".

Challenge 3: Keeping MQTT Payloads Simple

Problem: The system needs to transmit the user's intent from the frontend to the edge device reliably via IoT Core MQTT.

Solution: Rather than translating text to servo commands on the frontend (which would require large payloads with many servo poses), the frontend publishes only the cleaned sentence text as a compact { id, sentence, ts } JSON payload. The edge device is responsible for translating the sentence into ASL servo commands, keeping the MQTT messages small and the frontend simple.

Getting Started

GitHub Repository: https://github.com/chiwaichan/amplify-react-nova-sonic-voice-chat-amazing-hand

Prerequisites

- Node.js 18+

- AWS Account with Amazon Nova 2 Sonic model access enabled in Bedrock (us-east-1 region)

- AWS CLI configured with credentials

Deployment Steps

- Enable Nova 2 Sonic in the Bedrock console (us-east-1 region)

- Clone and Install:

git clone https://github.com/chiwaichan/amplify-react-nova-sonic-voice-chat-amazing-hand.git

cd amplify-react-nova-sonic-voice-chat-amazing-hand

npm install

- Start Amplify Sandbox:

npx ampx sandbox

- Run Development Server:

npm run dev

- Open Application:

Navigate to http://localhost:5173, create an account, and start talking. Note that the full system requires Parts 2 and 3 to be deployed for the physical hand to respond, but the frontend will still capture speech, process it through Nova 2 Sonic, and display the 3D hand animation independently.

What's Next

In Part 2, I will cover the cloud infrastructure layer — the AWS CDK stack (cdk-iot-amazing-hand-streaming) that routes IoT Core MQTT messages through Lambda to AppSync. This is the bridge that enables real-time GraphQL subscriptions, allowing the frontend to receive hand state updates from the edge device as they happen.

In Part 3, I will cover the edge AI agent (strands-agents-amazing-hands): a Strands Agent powered by Amazon Nova 2 Lite running on an NVIDIA Jetson that subscribes to the MQTT sentence text published by this frontend, translates it into physical servo movements on the Pollen Robotics Amazing Hand for ASL fingerspelling, records video of the hand in action, and publishes state back through IoT Core.

Summary

This post covered the frontend and voice processing layer of a real-time voice-to-sign-language translation system:

- Amazon Nova 2 Sonic is used not as a chatbot but as a speech relay, configured via system prompt and toolChoice: { any: {} } forced send_text tool use to clean up grammar, remove filler words, translate to English, and forward every utterance as text

- Audio pipeline captures at 48kHz via AudioWorklet, resamples to 16kHz, converts to PCM16 Base64 for Bedrock input. Nova 2 Sonic's audio output is intentionally discarded since only the cleaned text is needed

- MQTT publishing sends cleaned sentence text as { id, sentence, ts } to AWS IoT Core for the edge device to translate into ASL servo commands

- Real-time feedback via GraphQL subscriptions keeps the 3D Three.js hand animation synchronised with the physical Amazing Hand using 8 servo angles (thumb, index, middle, ring, each with two joints)

- Fully serverless frontend using AWS Amplify Gen 2 with Cognito authentication, no backend servers — direct browser-to-Bedrock and browser-to-IoT Core communication