Voice to Robotics: Fingerspelling ASL

Fingerspelling American Sign Language

Nova 2 Sonic · Bedrock · IoT Core · AppSync · Strands

Chiwai Chan — AWS User Group Wellington meet-up 29 April 2026

Who am I?

Tinkerer · Cloud · IoT · Robotics · Generative AI

https://chiwaichan.co.nz https://x.com/chiwaichanconz https://github.com/chiwaichan

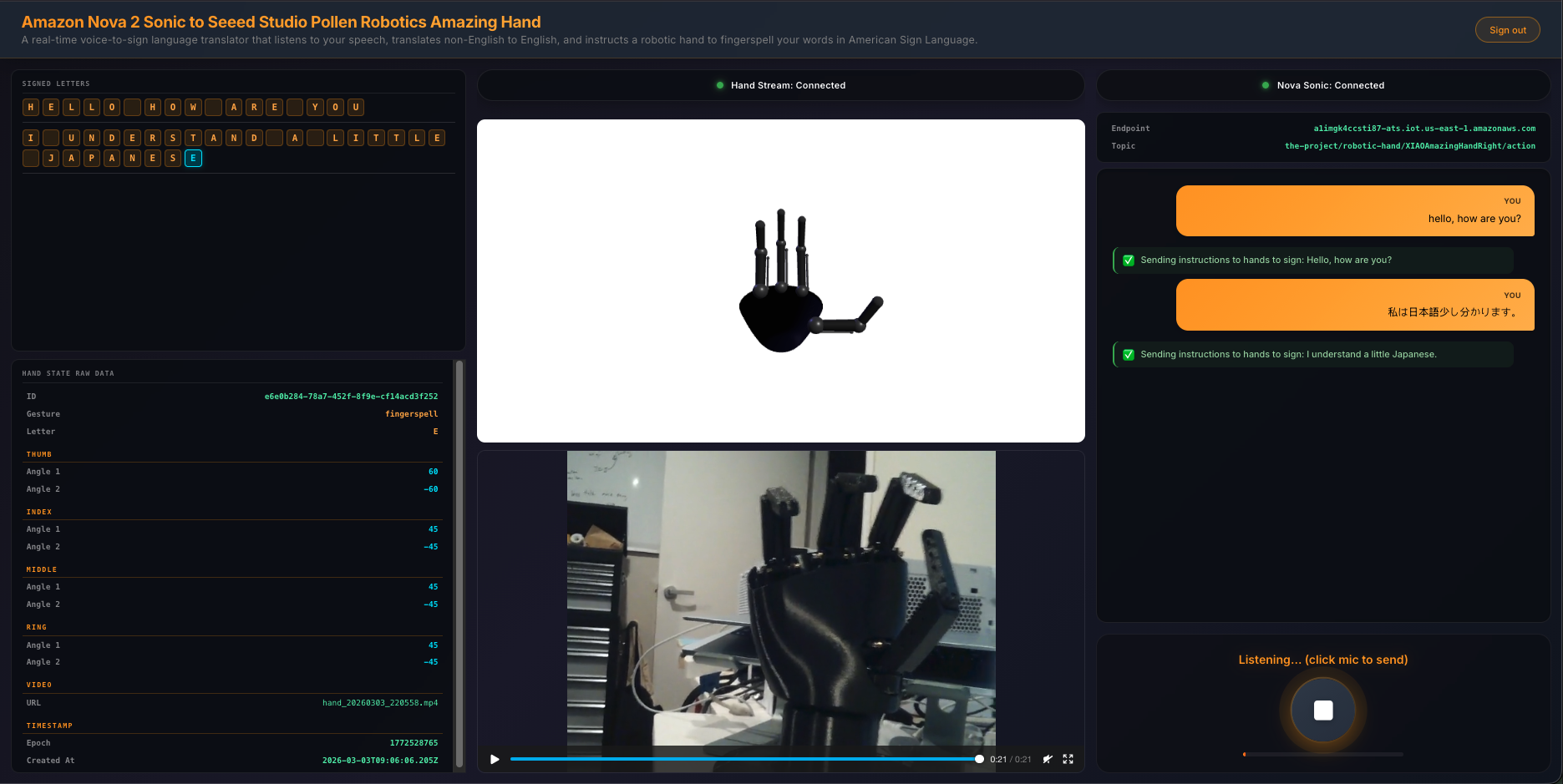

What are we building?

- Speak into a browser microphone

- The cloud cleans up and relays the text

- An edge agent on an NVIDIA Jetson translates it

- A robotic hand fingerspells it in ASL — in real time

Voice → Cloud → Edge AI → Robot hand

Why fingerspelling?

- ASL fingerspelling is a constrained, well-defined target

- One letter ≈ one hand pose — perfect for a 4-finger robotic hand

- Builds a foundation for richer signs later

- Real accessibility use cases

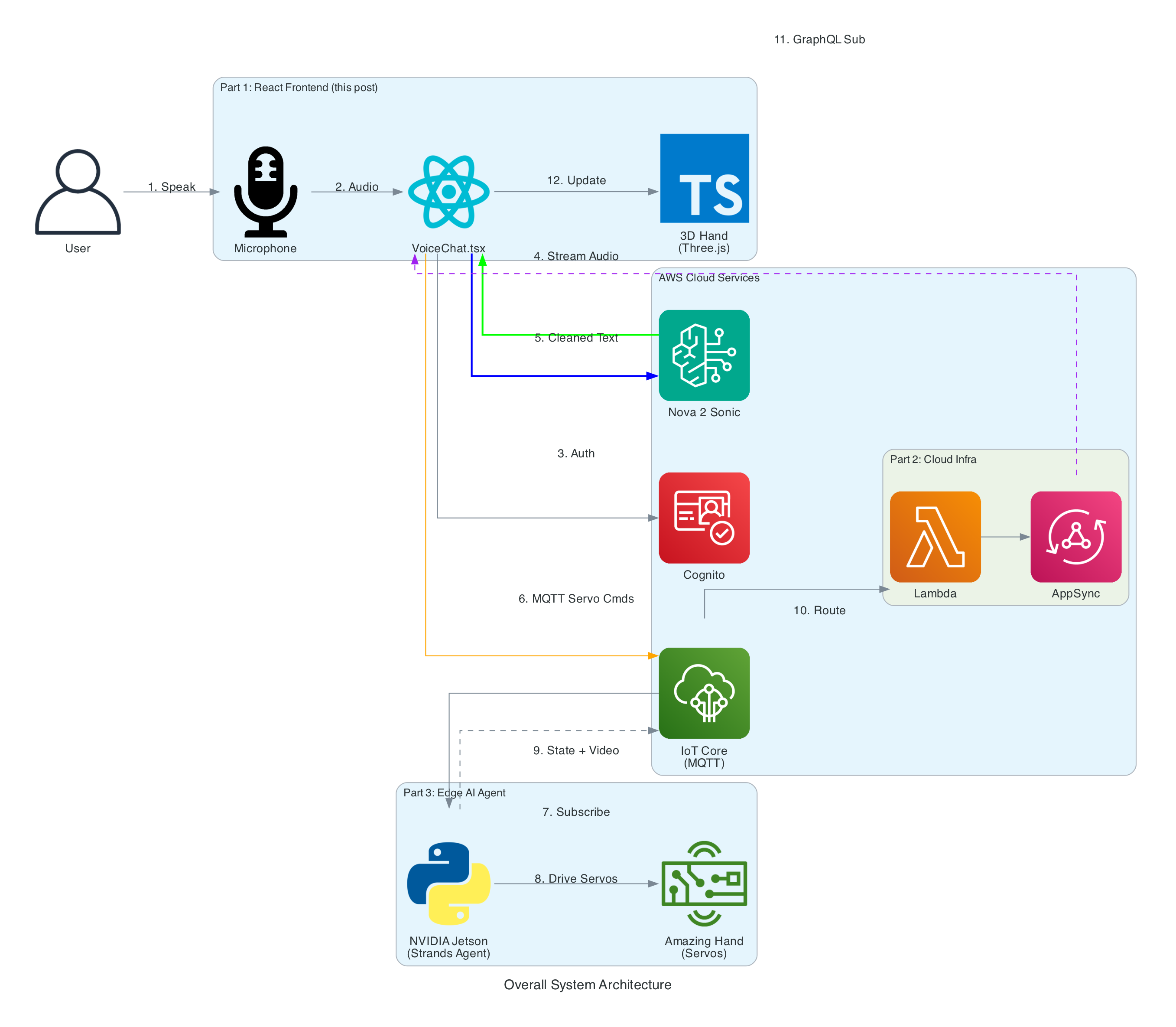

Voice in: Nova 2 Sonic as a "dumb relay"

Amazon Nova 2 Sonic on Amazon Bedrock.

- Not used as a chatbot

- Forced tool use via Bedrock's tool API: `toolChoice: { any: {} }`

- Every utterance fires `send_text` with cleaned-up text

- Removes filler ("um", "uh"), fixes grammar

- Translates non-English speech to English
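
To make the forced tool use concrete, here is a minimal sketch of what such a tool configuration could look like. The layout follows the `toolSpec` / `toolChoice` shape used by Bedrock's Converse-style tool API. The `text` parameter, the descriptions, and the `build_tool_config` helper are assumptions for illustration, and the exact event wrapping that Nova 2 Sonic's streaming API expects may differ (for example, it may want the schema as a JSON string).

```python
def build_tool_config() -> dict:
    """Return a tool configuration that forces the model to call send_text."""
    # Hypothetical schema: a single string field carrying the cleaned-up text.
    send_text_schema = {
        "type": "object",
        "properties": {
            "text": {
                "type": "string",
                "description": "Cleaned-up English text of what the speaker said",
            }
        },
        "required": ["text"],
    }
    return {
        "tools": [
            {
                "toolSpec": {
                    "name": "send_text",
                    "description": "Relay the cleaned-up utterance downstream.",
                    "inputSchema": {"json": send_text_schema},
                }
            }
        ],
        # "any" means the model must call one of the listed tools
        # instead of replying with free-form text.
        "toolChoice": {"any": {}},
    }
```

With only one tool listed, "any" effectively means "always call `send_text`", which is what turns the model into a relay rather than a chatbot.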

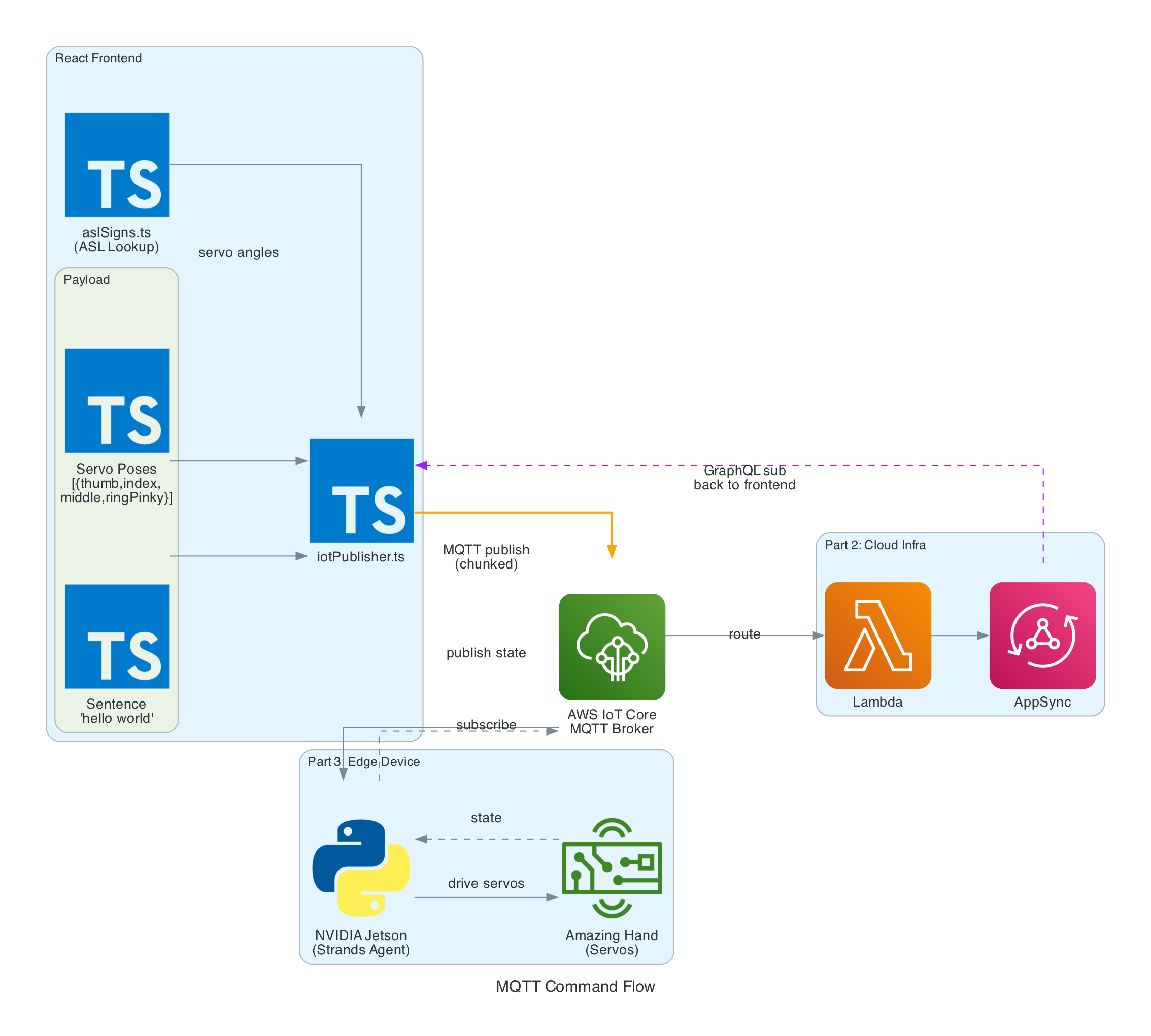

Why MQTT in the middle?

- Decouples the browser from the robot

- AWS IoT Core handles auth, fan-out, retained messages

- Fan-out to the browser via AWS Lambda → AWS AppSync subscriptions

- Edge device can be anywhere on the internet

- Same pattern scales to many hands / many speakers
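
The "many hands / many speakers" point comes down to MQTT topic filters. The sketch below shows how wildcard matching works in principle, using a hypothetical topic layout (`asl/hands/<hand-id>/sentence`) that is not taken from the project. It is a simplified matcher for illustration, not the broker's implementation, and it ignores the special rules for topics starting with `$`.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic matches a subscription filter.

    '+' matches exactly one level; '#' matches all remaining levels
    and is only valid as the last level of the filter.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: valid only in the final position.
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            return False  # filter is longer than the topic
        if level != "+" and level != topic_levels[i]:
            return False  # literal level does not match
    # No '#' seen, so both must have the same number of levels.
    return len(filter_levels) == len(topic_levels)
```

Under this assumed layout, a dashboard could subscribe to `asl/hands/+/sentence` to see every hand, while each Jetson subscribes only to its own `asl/hands/<hand-id>/sentence` topic.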

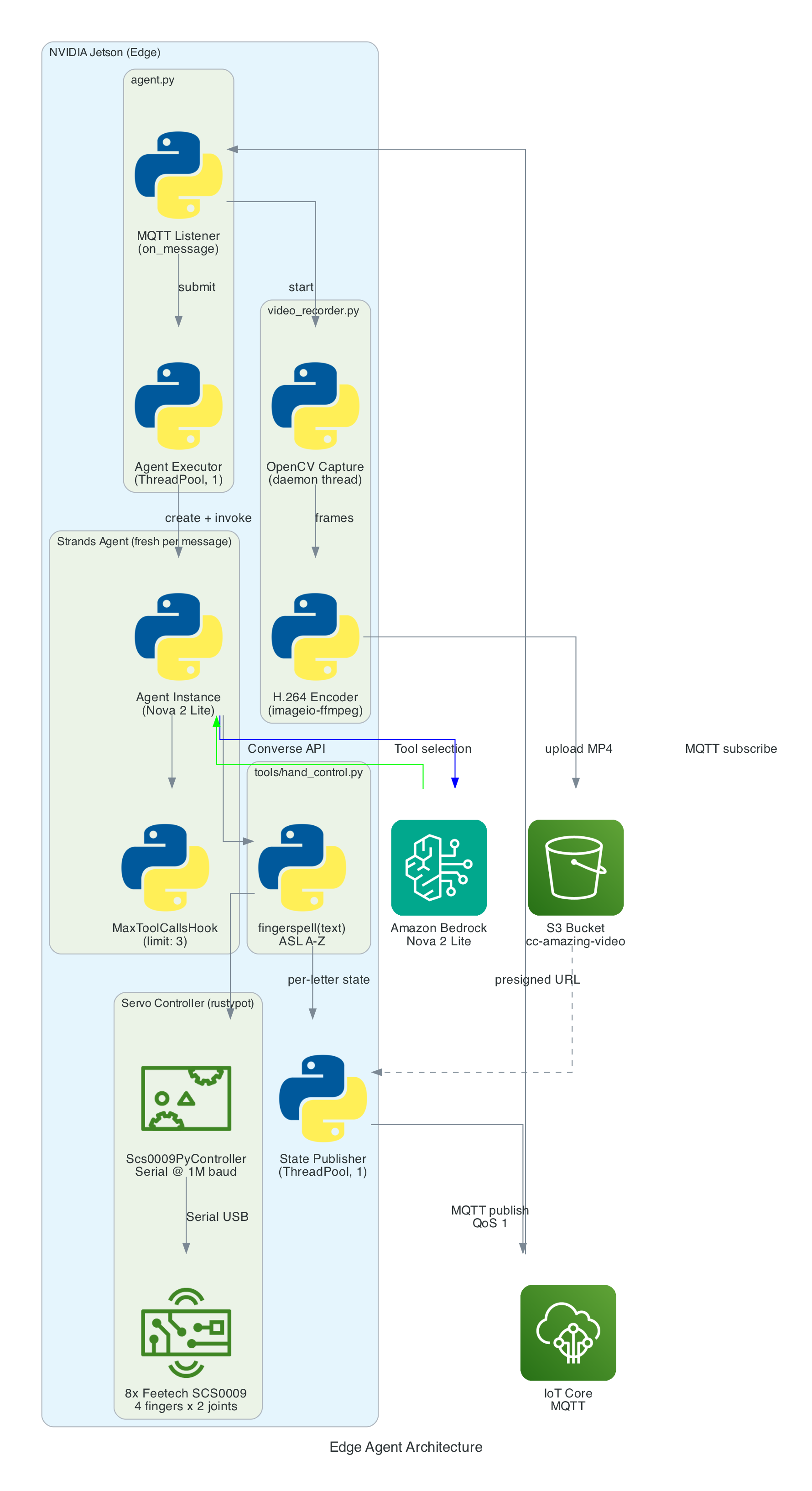

The edge agent (NVIDIA Jetson)

- Strands Agent powered by Amazon Nova 2 Lite (Amazon Bedrock)

- Subscribes to the sentence MQTT topic

- Tools: split sentence → letters → servo poses

- Streams video + state back via AWS IoT Core
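
The "sentence → letters → servo poses" step can be sketched as two small functions. The pose names, the `pose_rest` word gap, and the function names are all hypothetical. In the real agent these would presumably be registered as Strands tools and map to calibrated servo angles rather than strings.

```python
import string

# Hypothetical pose table: one named pose per letter A-Z.
POSES = {letter: f"pose_{letter}" for letter in string.ascii_uppercase}
REST_POSE = "pose_rest"  # assumed neutral pose used between words


def sentence_to_letters(sentence: str) -> list[str]:
    """Uppercase the sentence, keep A-Z, and collapse whitespace to one gap."""
    letters: list[str] = []
    for ch in sentence.upper():
        if ch in POSES:
            letters.append(ch)
        elif ch.isspace() and letters and letters[-1] != " ":
            letters.append(" ")  # a single marker for the word boundary
    if letters and letters[-1] == " ":
        letters.pop()  # no trailing gap
    return letters


def letters_to_poses(letters: list[str]) -> list[str]:
    """Map each letter to its pose name; word gaps become the rest pose."""
    return [POSES.get(letter, REST_POSE) for letter in letters]
```

For example, `sentence_to_letters("Hi, Bob!")` drops the punctuation and yields `H`, `I`, a word gap, then `B`, `O`, `B`.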

The robot hand

- Pollen Robotics Amazing Hand

- Open-source, manufactured by Seeed Studio

- 4 fingers, multiple servos per finger

- Custom calibration per ASL letter
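
Per-letter calibration can be pictured as a nominal pose plus per-servo offsets, clamped to each servo's safe range. This is a sketch under assumptions: the angle units, the limits, and the names `ServoLimits` and `apply_calibration` are invented for illustration and do not come from the Amazing Hand's software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServoLimits:
    """Assumed safe travel range for one servo, in degrees."""
    min_deg: float
    max_deg: float


def apply_calibration(
    nominal: list[float],
    offsets: list[float],
    limits: list[ServoLimits],
) -> list[float]:
    """Add per-servo offsets to a nominal pose and clamp to the limits."""
    if not (len(nominal) == len(offsets) == len(limits)):
        raise ValueError("nominal, offsets and limits must have the same length")
    calibrated = []
    for angle, offset, lim in zip(nominal, offsets, limits):
        target = angle + offset
        # Clamp so a bad offset cannot drive a servo past its range.
        calibrated.append(min(max(target, lim.min_deg), lim.max_deg))
    return calibrated
```

Keeping the offsets separate from the nominal poses means a single hand can be re-tuned without touching the letter definitions.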

Live demo

🎤 → ☁️ → 🤖 ✋

(Fingers crossed.)

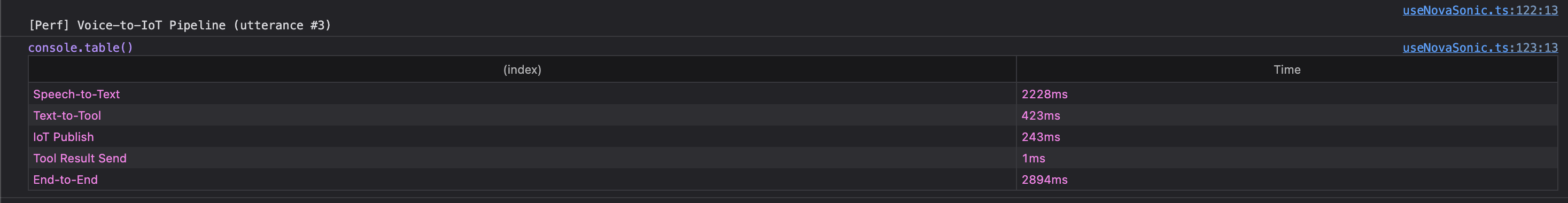

Latency: where the time goes

Measured from New Zealand → AWS us-east-1.

- Browser → Nova 2 Sonic (Bedrock): sub-second to first token

- Nova → MQTT publish: ~tens of ms

- MQTT → Jetson: ~tens of ms

- Letter pose → next letter: paced for legibility, not speed

Bottleneck is physical movement, not the cloud.
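
A back-of-the-envelope model shows why. All numbers below are illustrative assumptions, not measurements from the talk: roughly one second of cloud overhead in total, and about one second per letter for moving to a pose and holding it long enough to read.

```python
def signing_time_s(text: str, move_s: float = 0.5, hold_s: float = 0.5) -> float:
    """Time to fingerspell the letters in text, given assumed per-letter pacing."""
    letter_count = sum(1 for ch in text if ch.isalpha())
    return letter_count * (move_s + hold_s)


def cloud_share(text: str, cloud_overhead_s: float = 1.0) -> float:
    """Fraction of end-to-end time spent in the cloud path (assumed overhead)."""
    physical = signing_time_s(text)
    return cloud_overhead_s / (cloud_overhead_s + physical)
```

With these assumed values, a five-letter word takes about five seconds to sign, so the cloud path accounts for roughly a sixth of the total, and that share shrinks as sentences get longer.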

What surprised me

- Bedrock's forced tool use is a great "shape the output" trick

- AWS IoT Core + AppSync gives you real-time UI almost for free

- Servo calibration is the hardest part 😅

- Nova 2 Sonic handles accents and code-switching well

What's next

- More signs beyond fingerspelling

- Two-handed signing

- On-device speech for offline mode

- Attach the Amazing Hand to my LeRobot SO-101 arms

- Vision-Language-Action (VLA) models on the Jetson Thor

Thank you! — Questions?

Blog series: Part 1 — Frontend & Voice · Part 2 — Cloud Infrastructure · Part 3 — Edge AI Agent